Google’s BigQuery is one of the most widely used data warehouse solutions in the world, with a market share of 13.21% in 2022 according to 6Sense. BigQuery enables organizations across the globe to efficiently store, organize, process, and analyze data. Its pricing is based on two kinds of resource consumption: storage and compute (query analysis).

In this article, we cover only the analysis cost, so let’s start by clarifying how BigQuery charges for running queries.

BigQuery has two primary query analysis pricing models:

- On-demand pricing: The customer is charged for the bytes processed by each query. Queries that fail are not charged. The first 1 TiB of query data processed each month is free.

- Flat-rate pricing: The customer purchases dedicated query capacity in the form of virtual CPUs, known as slots, and pays a fixed fee regardless of the number of bytes processed. You can reserve slots for as little as one minute, on a month-to-month basis, or with a one-year commitment. It’s essentially an all-you-can-query plan.
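To make the on-demand model concrete, here is a minimal Python sketch that estimates a monthly analysis bill from a list of queries. The function name, the `(bytes_processed, succeeded)` input shape, and the $6.25/TiB rate in the example are illustrative assumptions, not official figures; check the Google Cloud price list for your region. The sketch also skips per-query rounding rules and treats every successful query as billable.

```python
TIB = 2**40  # on-demand analysis is priced per tebibyte (2**40 bytes)


def on_demand_monthly_cost(jobs, price_per_tib, free_tib=1.0):
    """Estimate one month's on-demand analysis bill.

    jobs: iterable of (bytes_processed, succeeded) pairs, one per query.
    price_per_tib: on-demand rate in USD (an assumed input, not a quoted price).
    free_tib: the monthly free allowance, in TiB.
    """
    # Failed queries are not charged, so only successful ones count.
    billed_bytes = sum(b for b, ok in jobs if ok)
    # The first free_tib each month is free; only the remainder is billed.
    billable_tib = max(0.0, billed_bytes / TIB - free_tib)
    return billable_tib * price_per_tib


# Example: 3 TiB from successful queries (1 TiB free), plus one failed 5 TiB query.
jobs = [(2 * TIB, True), (1 * TIB, True), (5 * TIB, False)]
print(on_demand_monthly_cost(jobs, price_per_tib=6.25))  # 12.5
```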

Recently, BigQuery introduced a new capacity-based pricing model built around three editions:

- Standard Edition, which provides capacity for basic SQL analysis.

- Enterprise Edition, which adds advanced enterprise analytics features, a 99.99% SLA, and BI acceleration.

- Enterprise Plus Edition, which builds on Enterprise Edition with region-level disaster recovery and support for compliance regimes under various regulations.

Starting July 5, 2023, BigQuery editions will fully replace the annual and monthly flat-rate plans as well as the Flex Slots plan. Customers currently on these plans can begin moving their capacity to the edition that fits their business needs and, later, switch to another edition tier as their requirements evolve.
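Editions bill capacity in slot-hours rather than bytes scanned. The sketch below shows the basic arithmetic under simplifying assumptions: a fixed baseline plus autoscaled capacity, both charged at one placeholder rate. The function name and the $0.04 rate are hypothetical, and commitment discounts are not modeled.

```python
def edition_capacity_cost(baseline_slots, hours, autoscaled_slot_hours,
                          price_per_slot_hour):
    """Rough capacity bill for a reservation under a BigQuery edition.

    baseline_slots: slots billed for every hour of the period.
    hours: length of the billing period in hours.
    autoscaled_slot_hours: extra slot-hours the autoscaler provisioned
        above the baseline during the period.
    price_per_slot_hour: placeholder rate; real rates vary by edition,
        region, and commitment term.
    """
    slot_hours = baseline_slots * hours + autoscaled_slot_hours
    return slot_hours * price_per_slot_hour


# Example: 100 baseline slots for a 720-hour month plus 5,000 autoscaled
# slot-hours, at an assumed $0.04 per slot-hour.
print(round(edition_capacity_cost(100, 720, 5_000, 0.04), 2))  # 3080.0
```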

How Can You Know Which BigQuery Pricing Model Fits Your Needs?

To choose the plan that works best for you, consider three common scenarios:

- You have just started using BigQuery. You’re not sure how many queries you will run or how much you will spend.

- You have been using BigQuery for a considerable time. The amount of data in your warehouse is increasing, and more people are using it as the business works to broaden access to data. You want to support this growth while keeping costs under control.

- You are merging multiple siloed data sources into a single consolidated source. You have already implemented Spark- or Python-based pipelines to support advanced analytics. As the number of data sources grows, it becomes harder to serve multiple lines of business, and the mix of workloads makes service-level objectives harder to meet.

You’ve just started using BigQuery

The on-demand pricing model is a good way to get started. Say you begin building your data architecture with small data warehouses that store and process modest amounts of data. With on-demand pricing, you can track compute consumption at low data volumes and keep an eye on your overall cloud spending.
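One way to keep an eye on on-demand consumption is to total the bytes billed per user from job metadata. The sketch assumes rows shaped like BigQuery’s `INFORMATION_SCHEMA.JOBS` view (its `user_email` and `total_bytes_billed` columns); fetching those rows is left out, and the helper name `bytes_billed_by_user` is hypothetical.

```python
from collections import defaultdict

TIB = 2**40


def bytes_billed_by_user(job_rows):
    """Total billed bytes per user, largest consumers first, in TiB.

    job_rows: dicts shaped like rows of INFORMATION_SCHEMA.JOBS; only the
    user_email and total_bytes_billed keys are read.
    """
    totals = defaultdict(int)
    for row in job_rows:
        # Treat a missing or NULL (None) byte count as zero.
        totals[row["user_email"]] += row.get("total_bytes_billed") or 0
    ranked = sorted(totals.items(), key=lambda item: item[1], reverse=True)
    return [(user, billed / TIB) for user, billed in ranked]


rows = [
    {"user_email": "analyst@example.com", "total_bytes_billed": TIB},
    {"user_email": "etl@example.com", "total_bytes_billed": 3 * TIB},
    {"user_email": "analyst@example.com", "total_bytes_billed": None},
]
print(bytes_billed_by_user(rows))
# [('etl@example.com', 3.0), ('analyst@example.com', 1.0)]
```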

If you follow best practices for controlling costs, you will most likely pay only for the queries you actually need. We strongly recommend setting up custom cost controls, which cap the amount of data that can be queried per day at the project or user level.
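BigQuery’s own controls are custom quotas (daily query-usage limits per project or per user) and the `maximum_bytes_billed` setting on individual query jobs, which makes a query fail instead of running when it would bill more than the cap. The sketch below only imitates the daily-budget idea on the client side so the logic is visible; `DailyByteBudget` is a hypothetical class, and `estimated_bytes` would in practice come from a dry run of the query.

```python
import datetime


class DailyByteBudget:
    """Hypothetical client-side guard that mimics a per-day query-usage cap.

    Call try_spend() with a query's estimated bytes (e.g. from a dry run)
    before submitting it; the query is refused if it would exceed the cap.
    """

    def __init__(self, limit_bytes):
        self.limit_bytes = limit_bytes
        self.day = None
        self.used_bytes = 0

    def try_spend(self, estimated_bytes, today=None):
        # Uses the local calendar date for simplicity; real BigQuery quotas
        # reset on Google's schedule, not the caller's local midnight.
        today = today or datetime.date.today()
        if today != self.day:
            self.day, self.used_bytes = today, 0  # new day, fresh budget
        if self.used_bytes + estimated_bytes > self.limit_bytes:
            return False  # over budget: do not submit the query
        self.used_bytes += estimated_bytes
        return True


budget = DailyByteBudget(limit_bytes=100 * 2**30)  # 100 GiB per day
day = datetime.date(2023, 6, 1)
print(budget.try_spend(60 * 2**30, day))  # True
print(budget.try_spend(50 * 2**30, day))  # False: would reach 110 GiB
```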

You’ve been using BigQuery for a considerable period of time

As your use of BigQuery grows, so do your costs. If you’re on the on-demand pricing model, consider BigQuery reservations as a way to reduce them.

BigQuery offers flat-rate pricing for customers who prefer a fixed cost for queries over paying the on-demand price per TiB of data processed. You can also combine the two pricing models.
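Whether a reservation pays off depends on how much you scan. Assuming a fixed monthly reservation fee and a flat on-demand rate (both placeholders), the break-even point is the free allowance plus the fee divided by the per-TiB price. The helper names below are hypothetical, and the comparison ignores that a reservation also caps capacity, which can slow queries down.

```python
def break_even_tib(monthly_fee, price_per_tib, free_tib=1.0):
    """Monthly TiB scanned at which on-demand spend equals a fixed fee."""
    return free_tib + monthly_fee / price_per_tib


def cheaper_model(monthly_tib, monthly_fee, price_per_tib, free_tib=1.0):
    """Pick the cheaper option for a given monthly scan volume (in TiB)."""
    on_demand_cost = max(0.0, monthly_tib - free_tib) * price_per_tib
    return "on-demand" if on_demand_cost <= monthly_fee else "reservation"


# Assumed figures: a $2,000/month reservation versus $6.25 per TiB scanned.
print(break_even_tib(2_000, 6.25))      # 321.0
print(cheaper_model(100, 2_000, 6.25))  # on-demand
print(cheaper_model(500, 2_000, 6.25))  # reservation
```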

When you’re just getting started with reservations, you can use a Flex Slots commitment. Flex Slots let you scale your data warehouse capacity up or down quickly, for periods as short as 60 seconds. For example, you could use Flex Slots to absorb a surge in data load on Black Friday or during a popular media event. However, Flex Slots will be decommissioned on July 5, 2023, so you will have to move to a corresponding BigQuery edition.

If you’re unsure about committing to a long-term slot reservation for your workloads, Standard Edition is a good substitute for Flex Slots. It lets you test a dedicated reservation for a short period and determine whether a longer slot commitment suits your needs.
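Short-lived capacity is attractive because you pay only for the window you need. The sketch below estimates the cost of such a burst assuming per-second billing with a 60-second minimum and a placeholder slot-hour rate; the function name `burst_cost` and all figures are illustrative, not Google’s published terms.

```python
def burst_cost(extra_slots, seconds, price_per_slot_hour, min_seconds=60):
    """Estimated cost of adding extra_slots for a short window.

    Assumes per-second billing with a minimum charge of min_seconds;
    price_per_slot_hour is a placeholder rate.
    """
    billed_seconds = max(seconds, min_seconds)
    return extra_slots * billed_seconds / 3600 * price_per_slot_hour


# Example: 2,000 extra slots for a six-hour Black Friday peak at an
# assumed $0.04 per slot-hour.
print(round(burst_cost(2_000, 6 * 3600, 0.04), 2))  # 480.0
```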

It’s worth noting that you can combine pricing models to address different workloads. For example, if you run several workloads on BigQuery, such as data ingestion, ELT-style data transformation, and reporting or ad hoc querying, you can choose a different pricing model for each one to optimize costs.

Finally, suppose you have a good estimate of how much data your ELT jobs will process each day. Because the bytes processed are predictable, on-demand pricing may be a good fit for these workloads: you can run them in a project that is not assigned to a reservation, so they use on-demand resources instead.
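The routing decision described above can be expressed as a simple rule of thumb. In this sketch, `Workload`, `choose_pricing`, and the thresholds are hypothetical: workloads with SLAs go to reserved capacity, and predictable ones stay on-demand when their projected monthly on-demand spend is below an assumed share of the reservation cost. The sketch ignores the monthly free allowance. In BigQuery itself, the choice takes effect through which project runs the jobs and whether that project is assigned to a reservation.

```python
from dataclasses import dataclass

TIB = 2**40


@dataclass
class Workload:
    name: str
    daily_bytes: int  # estimated bytes processed per day
    needs_sla: bool   # requires guaranteed capacity


def choose_pricing(workload, price_per_tib, reservation_share, days=30):
    """Hypothetical rule of thumb for routing a workload.

    reservation_share: the part of a monthly reservation fee this workload
    would otherwise account for (an assumed figure).
    """
    if workload.needs_sla:
        return "reservation"
    monthly_on_demand = workload.daily_bytes * days / TIB * price_per_tib
    return "on-demand" if monthly_on_demand <= reservation_share else "reservation"


workloads = [
    Workload("elt", daily_bytes=2 * TIB, needs_sla=False),
    Workload("reporting", daily_bytes=TIB // 2, needs_sla=True),
    Workload("ad_hoc", daily_bytes=5 * TIB, needs_sla=False),
]
for w in workloads:
    print(w.name, choose_pricing(w, price_per_tib=6.25, reservation_share=500))
```

Here the ELT workload (about 60 TiB a month, roughly $375 at the assumed rate) stays on-demand, while the SLA-bound reporting and the heavier ad hoc workload land on the reservation.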

You’re merging multiple sources of siloed data into a single consolidated source

In addition to the scenarios above, your data warehouse has power users who query your data continuously and ingest large volumes of new data into BigQuery. The more data you have, the more data pipelines you create, and once you are running a large number of them, it may be a good time to switch to a flat-rate pricing model.

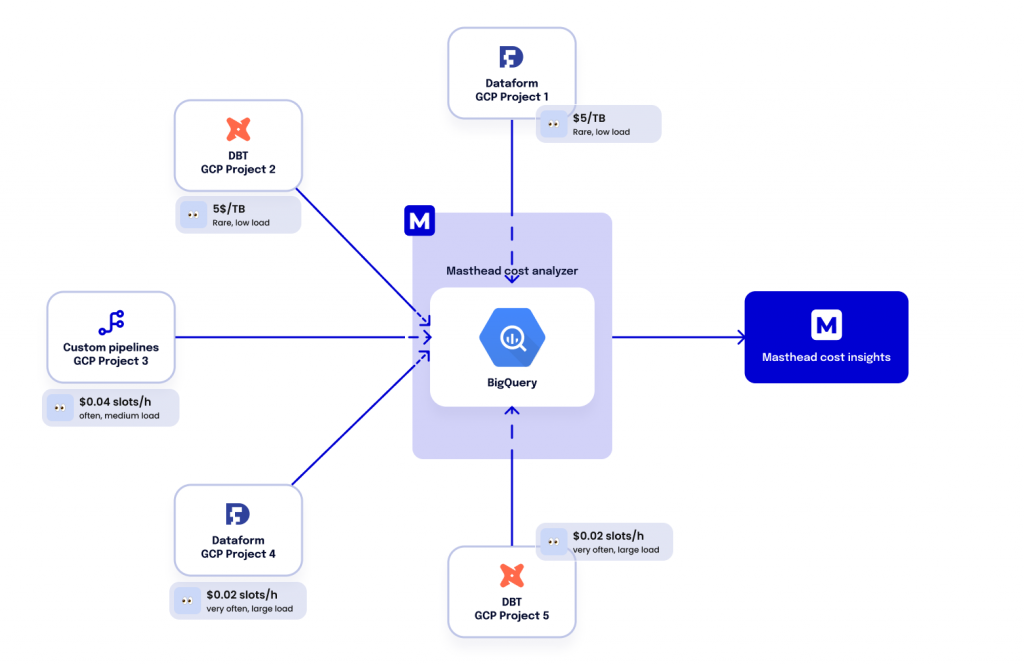

If your data science and advanced analytics tasks involve custom Python pipelines or Dataform jobs, you may be able to achieve cost savings by running those workloads in a Google Cloud project that is assigned a slot reservation.

But be careful! When one of our customers started using our new query insights monitoring feature, they noticed that on one particular day some daily scheduled queries took ten times longer than usual, which delayed the creation of required tables and views. It turned out that several other high-load scheduled queries were occupying the reserved slots at the same time, creating a long queue of SQL queries and stretching execution times.
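A basic version of this kind of check is to compare each run of a scheduled query with its own history. The sketch below is not Masthead’s implementation; the function name, the 3x threshold, and the minimum history length are assumptions. Durations could be derived, for example, from the `start_time` and `end_time` columns of `INFORMATION_SCHEMA.JOBS`.

```python
from statistics import median


def is_unusually_slow(history_seconds, latest_seconds, factor=3.0, min_history=5):
    """Flag a scheduled query run that is much slower than its usual runs.

    history_seconds: past durations of the same scheduled query.
    factor: how many times the historical median counts as "unusual".
    """
    if len(history_seconds) < min_history:
        return False  # too little history to judge
    return latest_seconds > factor * median(history_seconds)


history = [60, 62, 58, 61, 59]
print(is_unusually_slow(history, 600))  # True: roughly 10x the median
print(is_unusually_slow(history, 70))   # False
```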

Final thoughts

Once your infrastructure is mature enough that you need to merge siloed data from multiple sources into a single consolidated source, you may need to combine several pricing models to stay cost-efficient and meet your objectives. These could include:

- BigQuery Reservations for workloads with SLAs that require guaranteed capacity and cost predictability

- On-demand pricing for workloads with predictable data processing volumes, where paying per byte scanned is cost-effective because you pay only for the data you actually process

You can learn more about BigQuery pricing models on the Google Cloud website. Masthead has rolled out cost attribution functionality that shows how much each solution you run in your cloud contributes to your cloud costs. Check out our changelog to learn more about our cost attribution features.